This is part six of my series on the 2025 AI Index. It's time to zoom out and ask: what are governments actually doing about any of this?

The answer: more than last year, but still not nearly enough.

From flashy investment pledges and landmark legislation to deepfake crackdowns, policymakers are starting to get serious about AI, or at least pretending to. But behind the big announcements lie glaring gaps, slow-moving regulatory systems, and a global legal patchwork that's nowhere close to keeping up with the pace of the technology.

Let's break it down.

1. Key Policy Milestones

2024 wasn't the year governments figured AI out, but it was the year they started trying in earnest.

From the first comprehensive AI law to unprecedented state-backed investments and new enforcement bodies, countries moved beyond vague principles and into the realm of actual policy. The scale and seriousness varied, but the message was clear: AI is now a matter of national and geopolitical strategy. Here's what mattered most.

EU AI Act Passed, March 2024

The European Parliament passed the AI Act in March 2024, creating the world's first comprehensive legal framework for AI. It takes a risk-based approach: it bans practices such as social scoring and the untargeted scraping of facial images to build facial recognition databases, imposes strict rules on high-risk systems, and requires transparency for general-purpose models. Most provisions take effect in 2026.

In my article on the AI Act, I argued that while critics call it overreaching, it strikes a vital balance between innovation and accountability. Like GDPR, the AI Act exemplifies the Brussels Effect, with Europe shaping global standards by regulating first and at scale. If implemented effectively, it could influence AI governance far beyond EU borders.

Abu Dhabi Launches MGX Fund, March 2024

In a bold move to stake a global claim in the AI race, Abu Dhabi launched MGX, a $100 billion investment firm focused on artificial intelligence and advanced technologies. Backed by the emirate's sovereign wealth, it serves as both an economic development tool and a geopolitical signal.

MGX goes beyond investing in AI startups, aiming to build critical infrastructure, attract top research talent, and secure compute resources at scale. The UAE joins a growing list of countries treating AI as a national asset class rather than just a tech sector.

UN Resolution on Trustworthy AI, March 2024

In a move signaling the seriousness of AI governance, the United Nations adopted its first-ever resolution on artificial intelligence. The text calls for AI that is "safe, secure and trustworthy," emphasizing human rights, sustainable development, and international cooperation. It encourages governments to share best practices and promote inclusive AI governance.

Like most UN resolutions, it carries no enforcement power. It's more a wish list than a rulebook, packed with phrases like "promote transparency" and "respect human rights." Still, it matters symbolically. When AI makes it into the UN's diplomatic vocabulary, the issue has clearly become mainstream.

UK AI Security Institute Releases Inspect Toolset, April 2024

The UK's AI Security Institute (then still called the AI Safety Institute) made a practical contribution to AI governance in April 2024 with Inspect, an open-source framework for evaluating large language models.

Inspect is written in Python and supports evaluations across providers including OpenAI, Anthropic, Google, Mistral, Hugging Face, and local models. It enables structured testing for dangerous capabilities, misuse potential, and alignment issues, with tools for prompt engineering, dialogue management, and automated grading.

In a space dominated by principles and press releases, Inspect stands out as an actual product, not a pledge. The UK signaled that public institutions can play a direct role in technical AI evaluation, not just in writing rules for others to interpret.

EU AI Office Launched, May 2024

Following the passage of the AI Act, the European Union launched the AI Office in May 2024. The office is led by Lucilla Sioli, a senior European Commission official known for her work on digital policy and AI regulation.

The AI Office enforces the AI Act, coordinates policy across member states, and supervises high-impact AI systems including general-purpose models. With over 140 experts, it acts as both regulator and facilitator, issuing codes of practice, overseeing risk management, and investigating non-compliance.

China Launches $47.5 Billion Semiconductor Fund, May 2024

On May 24, 2024, China launched the third phase of its China Integrated Circuit Industry Investment Fund (the Big Fund), with 344 billion yuan ($47.5 billion) in registered capital, making it the largest of the fund's three phases since it was first established in 2014.

The move is part of China's long-running push for chip self-sufficiency, but ongoing U.S. export controls on advanced semiconductors and AI accelerators have made it far more urgent. Backed by the Ministry of Finance and China's five biggest banks, this round emphasizes chip manufacturing equipment and reads less like routine industrial policy than a survival plan for a geopolitical tech standoff.

China's AI ambitions rest on compute, and compute rests on chips. This fund signals that Beijing views AI hardware as a national security asset, not just an industry vertical.

California Governor Vetoes AI Safety Bill, September 2024

In late September 2024, Governor Gavin Newsom vetoed SB 1047, which would have been the first U.S. law to impose safety requirements on frontier models. The bill targeted only extremely large systems costing over $100 million to train, requiring safety protocols, audits, and incident reporting.

Critics called the veto a missed opportunity for real accountability on powerful AI systems, warning that without safeguards, advanced models could enable cyberattacks, bioweapon development, or autonomous criminal behavior.

SB 1047 wasn't broadly restrictive, leaving ordinary development and research untouched. Its narrow requirements focused on catastrophic misuse. California, home to leading AI labs, had the chance to lead with meaningful regulation but deferred to industry comfort and federal inaction.

Saudi Arabia Announces Project Transcendence, November 2024

Saudi Arabia unveiled Project Transcendence, a $100 billion AI initiative to make the kingdom a global contender. The plan includes Arabic-language foundation models, national data infrastructure, and talent development.

Part of Vision 2030, the project positions AI as key to Saudi Arabia's geopolitical ambitions. As with its push into sports and entertainment, the aim is to leapfrog into the front ranks of the global AI race.

Details remain vague, but the scale signals AI is now a national asset, and Riyadh is all in.

Stargate Initiative Announced, January 2025

The United States unveiled the Stargate Initiative, a $500 billion private-sector plan to overhaul national AI infrastructure through data centers, compute clusters, and energy systems. Announced at the White House by President Trump, the project arrived with characteristic flair.

Surprisingly, this isn't all smoke. Construction is underway in Abilene, Texas, on a 4-million-square-foot data center campus with 1.2 gigawatts of power. $100 billion is slated for immediate deployment, with OpenAI, SoftBank, Oracle, and MGX as backers. OpenAI leads operations while SoftBank manages finances.

Doubts linger about the full $500 billion figure. As with all Trumpworld projects, the branding is loud and the ambition towering; execution remains to be seen.

France Announces €109B AI Investment, February 2025

At the AI Action Summit in Paris, France unveiled €109 billion in AI investments, the largest European commitment to date. Funding comes from public and private players including the UAE, Brookfield Asset Management, Fluidstack, Iliad, and Mistral AI, focusing on data centers, compute capacity, and foundational research.

President Macron framed it as France's answer to the U.S. Stargate project. France also launched INESIA, a new AI safety body, and backed the EU's €150 billion AI Champions Initiative.

The announcement signals a serious push to make France a global AI hub. Delivery is now the real test.

2. Governments Are Regulating, But Still Playing Catch-Up

AI regulation is no longer just a fringe topic. Governments around the world are drafting, debating, and in some cases enacting legislation to rein in AI's growing influence. But progress remains uneven, reactive, and often symbolic.

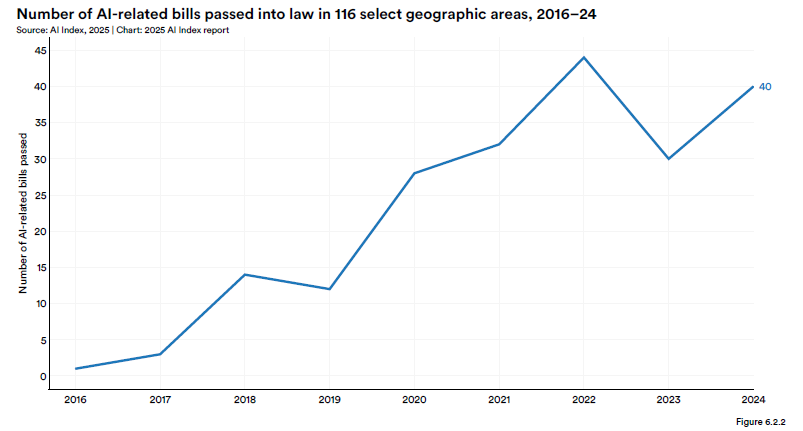

The figure above shows the growing number of AI-related legislative bills introduced and passed worldwide over the past few years.

The trendline is unmistakable: legislative interest in AI is accelerating. The United States leads in total bills passed, with a significant jump in state-level legislation in 2024. But keep in mind, volume does not necessarily mean substance.

Of the 116 countries analyzed in the AI Index report, only 39 have actually enacted any AI-related legislation. That leaves roughly two-thirds of them operating in a legal vacuum when it comes to managing the risks posed by AI.

And those risks are mounting. As I discussed in Part 3 of this series, real-world incidents of AI misuse are rising, ranging from privacy violations and election manipulation to harmful deepfakes and model hallucinations in high-stakes domains like healthcare and law. Regulation is no longer optional; it is the baseline requirement for deploying these systems responsibly at scale.

The U.S., while still lacking comprehensive federal AI regulation, is showing a particular legislative focus on deepfakes. Much of the attention is centered on AI-generated intimate imagery and political impersonations. In parallel, platforms like Character.ai have raised concerns about the increasingly blurred line between human and AI interactions. When a chatbot simulates real or fictional characters with emotional realism, the risk of psychological manipulation or misinformation becomes more than theoretical; it becomes personal.

Recent developments involving xAI's Grok image generator have raised concerns about the creation and dissemination of explicit deepfakes. Grok, which is integrated into Elon Musk's social media platform X, has been used by multiple X users to digitally "undress" images of women. The problem is not limited to Grok, and the proliferation of such content highlights the urgent need for robust regulatory frameworks to address the ethical and legal implications of AI-generated deepfakes.