In my last article, I covered Chapter 7 of the AI Index, which focused on education.

It turned out to be a timely reminder: teaching people about AI is no longer optional. And not just in schools. If we want to live in a world where decisions about AI are made rationally, by voters, consumers, regulators, and CEOs, we need more than access to the tools. We need understanding.

That's what makes this next chapter, on public opinion, so important and so frustrating.

Public opinion shapes the real world. It influences policy, directs investment, and moves markets. When enough people believe that AI is either salvation or doom, someone somewhere is going to write a law or sign a check based on that belief. So the stakes are high. Which means, ideally, public opinion would be, well... informed.

Not paranoid. Not starry-eyed. Just grounded. Factual. Stoic, even. But as we'll see in this chapter, what people think they know about AI and what they actually do know are not always the same. And that gap matters.

1. Global Opinion: Confidence Without Clarity

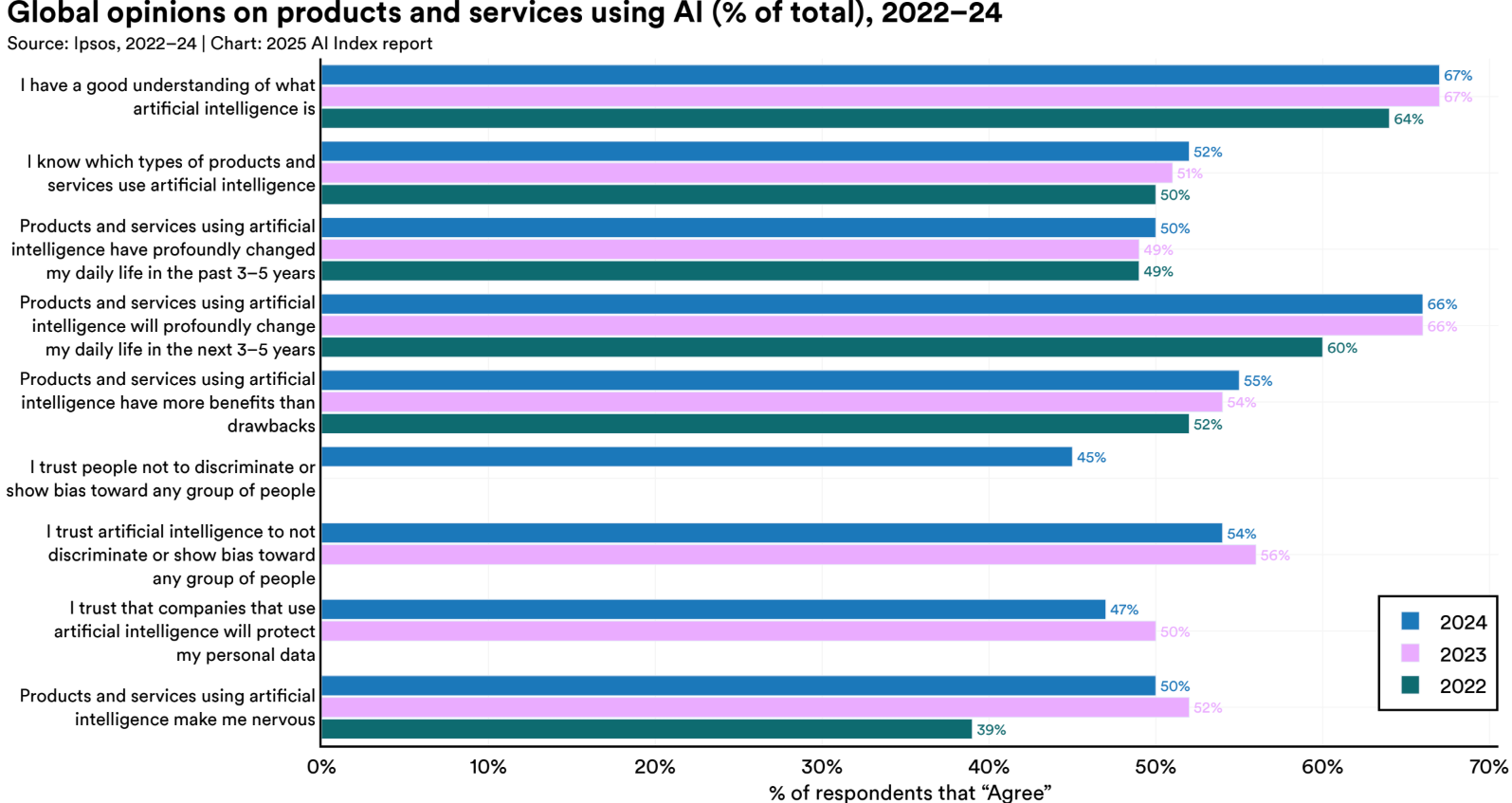

Let's start with the big picture. According to the 2025 AI Index, public sentiment around artificial intelligence is warming. A growing number of people believe AI will benefit society more than it will harm it: 55% now say AI-powered products and services have more benefits than drawbacks, up from 52% in 2022.

But there's a difference between optimism and informed optimism. And this data doesn't exactly scream "deep understanding."

The clearest example? 67% of people say they have a good understanding of what AI is. That is a bold claim, and one that is hard to believe if you have ever tried to explain how large language models work to a room of professionals who use them daily. Most people are not lying. They are just mistaking familiarity for comprehension. They have seen AI in headlines, in product features, in their feeds. But that is not understanding. That is recognition.

As I mentioned in my last article, even people who work in the field sometimes bluff their way through conversations. If some AI professionals can't articulate what's under the hood, what exactly is the general public so confident about? Many people do not know enough about AI to realize that they do not understand it.

This lack of depth becomes even clearer when you look at who people are willing to trust. 47% of respondents say they trust companies that use AI to protect their personal data. Think about that for a second. Nearly half of those surveyed believe that companies using AI are good stewards of their privacy.

Should we respect that opinion? Better yet, should we trust companies like Meta? Of course. I am sure Zuckerberg will go out of his way to protect users' data without any regulation or public scrutiny.

This is the same company that brought us the Cambridge Analytica scandal, and whose CEO wants to let children discuss sex with AI chatbots. The same CEO who created FaceMash. As if social media had not already done enough to wreck adolescent well-being, now the plan is to wrap intimacy, manipulation, and AI novelty into one shiny new engagement tool. Not because it is good for teenagers. Because it is good for attention. Good for data. Good for ads. That is what public trust is rewarding. Not safety. Not ethics. Business models.

So when people say they trust AI companies to respect privacy, what they really mean is that they have not been paying attention.

And it does not stop there. 50% say they trust AI systems not to be biased. Oh boy! Again, this is a generous assessment. Discrimination in AI is not a side effect. It is a well-documented risk baked into many systems through the data they are trained on, the objectives they optimize for, and the incentives behind their deployment. That so many people think otherwise is not reassuring. It is revealing.

Still, the trends are headed in the right direction. Trust in companies protecting data is down slightly from last year. Trust in unbiased AI is slipping too. These are small shifts, but they suggest that some people are starting to look more critically at the systems shaping their lives. That is a good thing. A necessary thing.

At the same time, only 66% believe AI will profoundly change their lives in the next 3 to 5 years. That means roughly 1 in 3 people still expects things to stay more or less the same. That is a staggering disconnect. AI is already affecting what people see online, how they shop, how they work, and how they are judged, whether by algorithms in hiring, in finance, or in healthcare. But sure, if an algorithm is already deciding whether your medical insurance claim gets denied, it would be crazy to call that a profound impact on your life. If you think AI is not going to change your life, chances are it already has and you just did not notice.

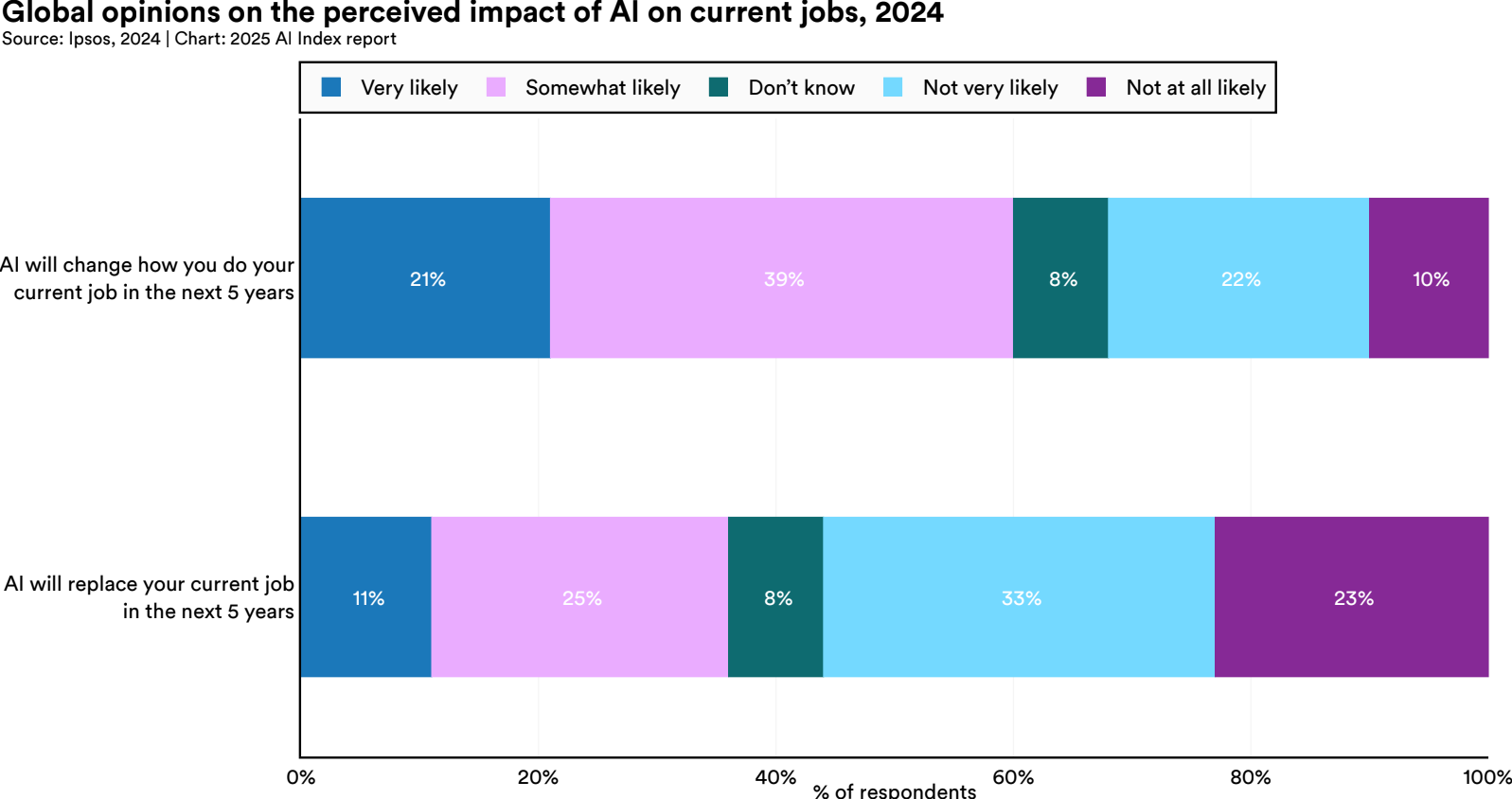

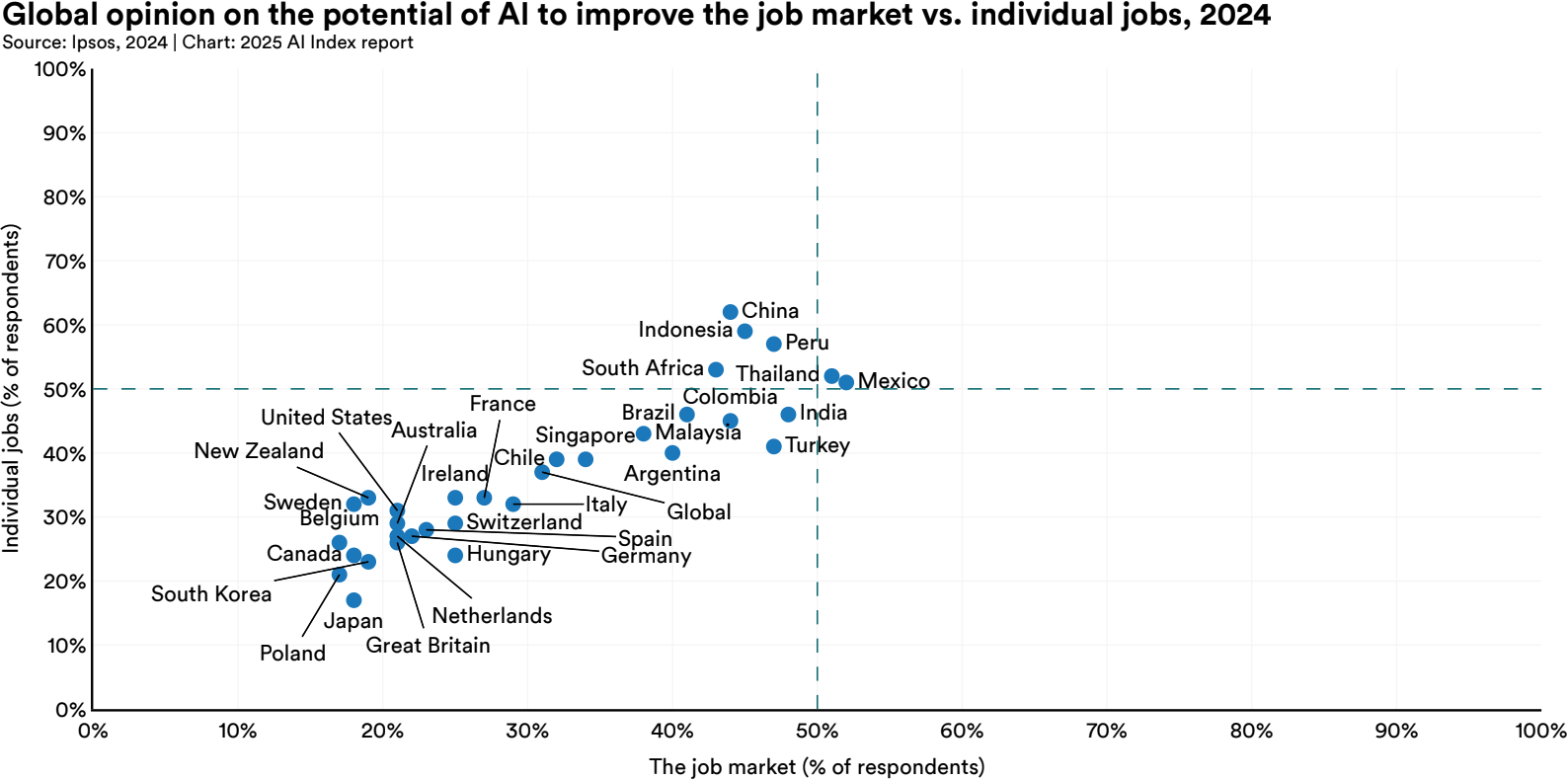

Concern about AI's impact on work is also rising. 60% of people believe AI will change how they do their job in the next five years, and 36% think it could replace their job entirely. Those are big numbers. But like the rest of this survey, they are hard to interpret. How many of these people are responding based on real risk? How many are reacting to news cycles, social media doomscrolling, or vague unease?

That lack of awareness does not come out of nowhere. Public opinion on AI is shaped by headlines, politics, culture, exposure, income, geography, gaps in AI education, and a thousand other variables, many of which have little to do with actual understanding. People are not being irrational. They are being human. But when that human response becomes the basis for policy, investment, and product design, it is worth questioning how grounded it really is.

We do not need public opinion to be technical. But we do need it to be informed. And right now, it is not.

2. Regional Differences: Optimism, Fear, and Culture

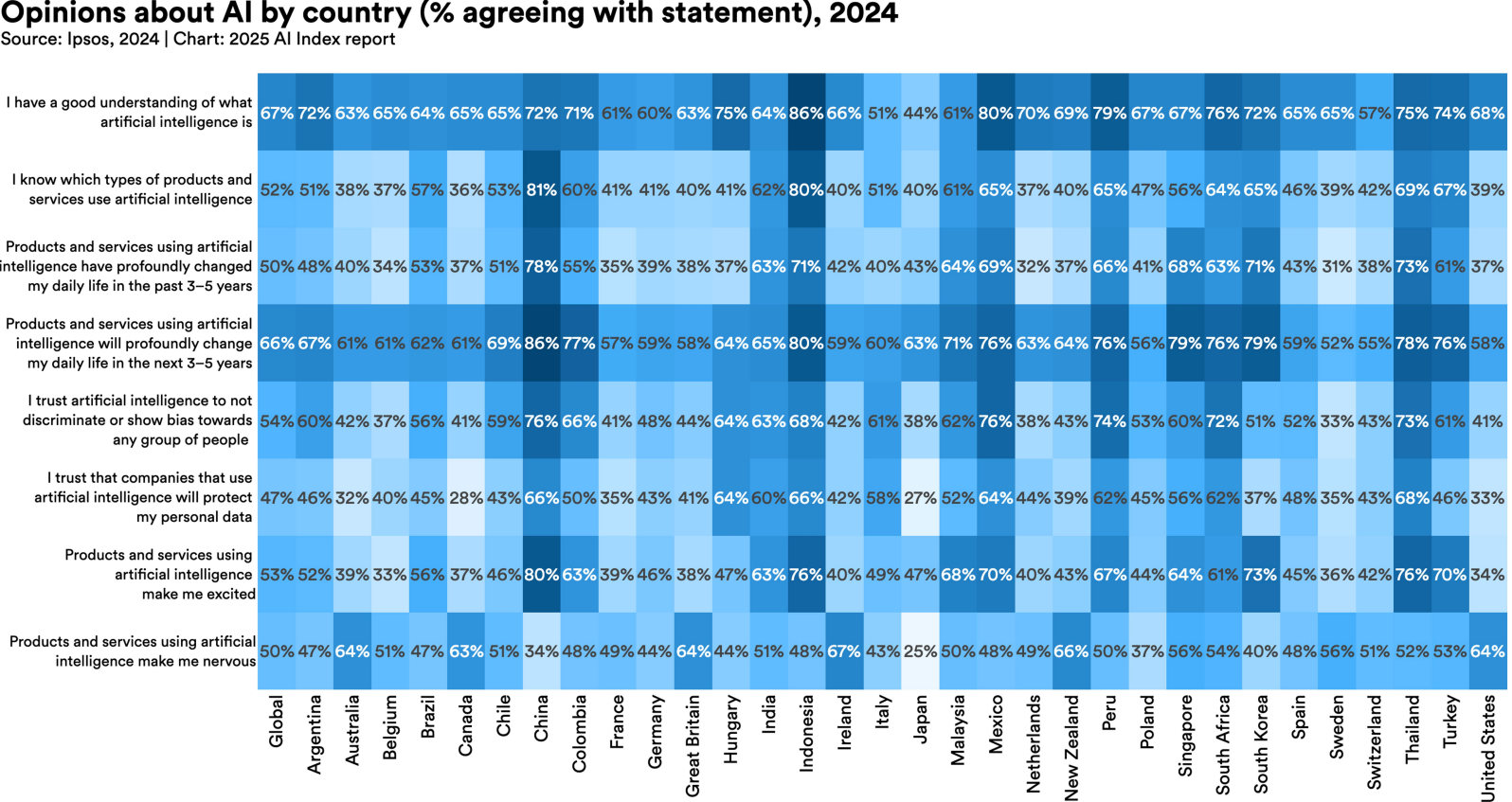

If public opinion on AI is poorly grounded at the global level, it gets even murkier when you zoom in. Not every country sees AI the same way, and the differences are not just technical or economic. They are cultural.

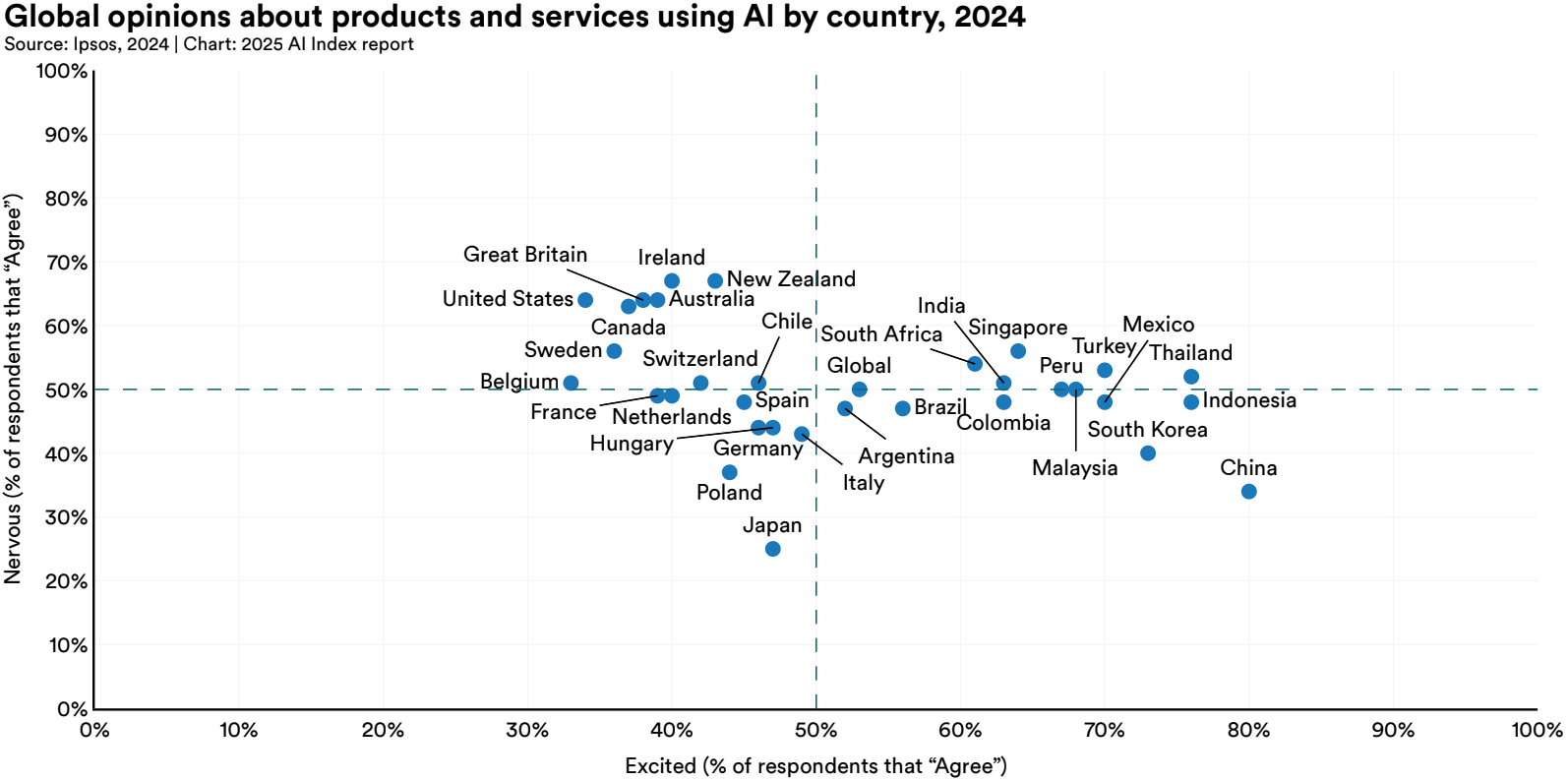

Some countries are overwhelmingly optimistic about AI. In China, 83% of people believe AI will do more good than harm. In Indonesia and Thailand, the figures are 80% and 77%, respectively. Meanwhile, in the United States, only 39% share that view. Canada sits at 40%, the Netherlands at 36%.

These differences are striking. But they do not necessarily reflect how much AI is being used. They seem to reflect how AI is being perceived. In some regions, AI is marketed as progress, innovation, a national strength. In others, it is wrapped up in debates about surveillance, labor displacement, ethics, and platform power. People are responding not to the models, but to the stories built around them.

There is a clear pattern here. In countries where people believe AI is already impacting their lives, or soon will, they are also more likely to view that impact as positive. That might seem intuitive, but it is not necessarily a simple case of cause and effect. The relationship could just as easily run in the other direction: if you already think AI is a good thing, you are probably more inclined to see it everywhere. Optimism does not just follow experience. Sometimes it filters it.

These judgements are shaped by education systems, news cycles, government messaging, corporate narratives, and social experience. That is why two people using the same product, with the same features, in different parts of the world, might come away with opposite opinions about whether it is good or dangerous.

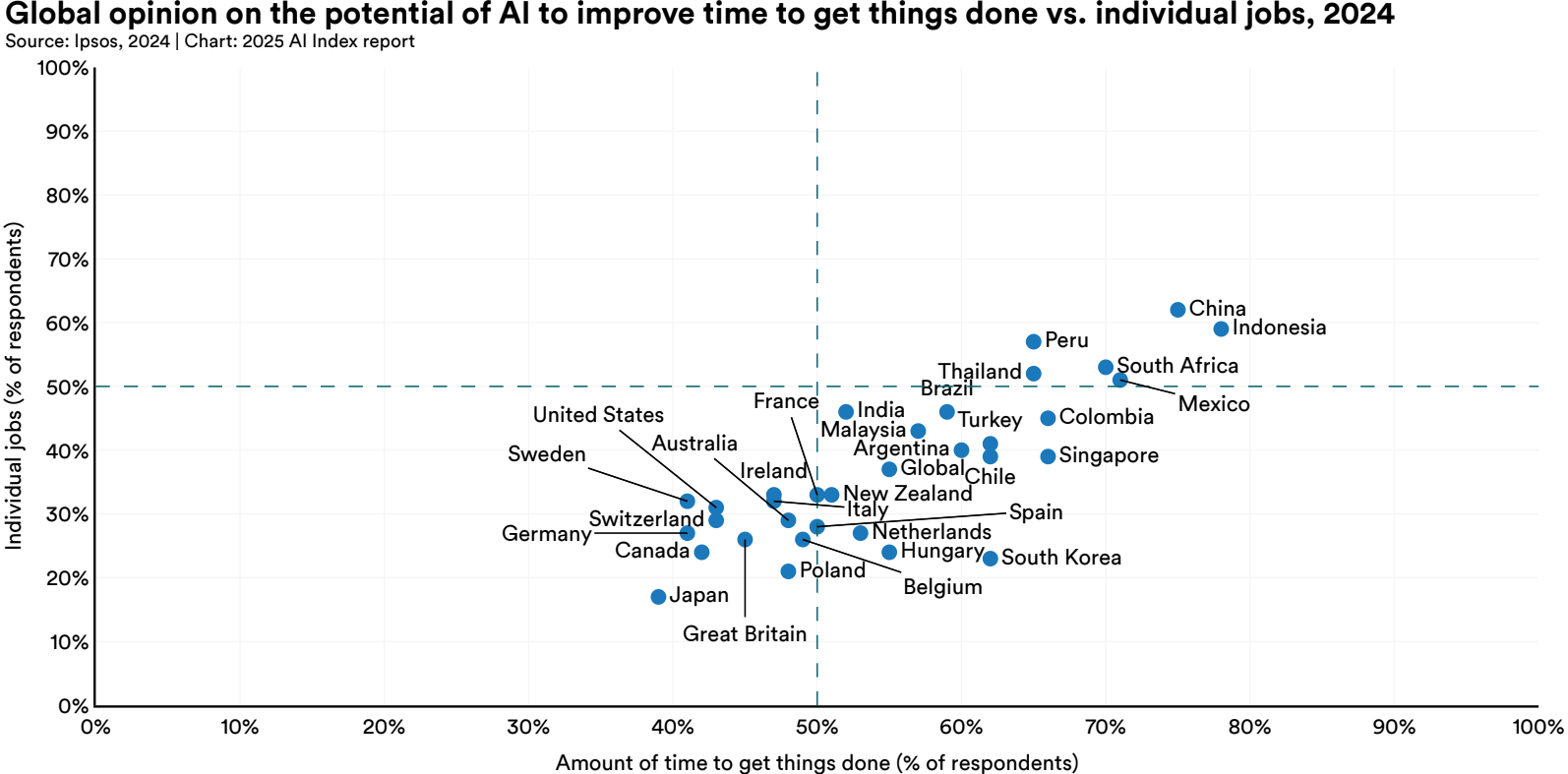

This shows up clearly when you look at perceived benefits. In China and Indonesia, most respondents believe AI will help improve the economy, save time, and enhance their jobs. In the United States, Belgium, and Sweden, those expectations are far lower. You could interpret that as realism, or you could interpret it as a deeper skepticism shaped by cultural context. It is not about who uses more AI. If anything, countries like China have more pervasive AI deployment in daily life, from payments to surveillance to education. But exposure does not guarantee criticism. In countries like the United States or Canada, skepticism might be less about how much AI is used and more about how loudly its risks are debated. The framing is different. So are the instincts.

None of this means one region is right and another is wrong. It just means perception is local. Which is exactly why global surveys can be so misleading if taken at face value. What looks like consensus is often just cultural echo.

3. Generational Divide: A Future Seen Through Different Lenses

The gap in opinion is not only global. It is generational.

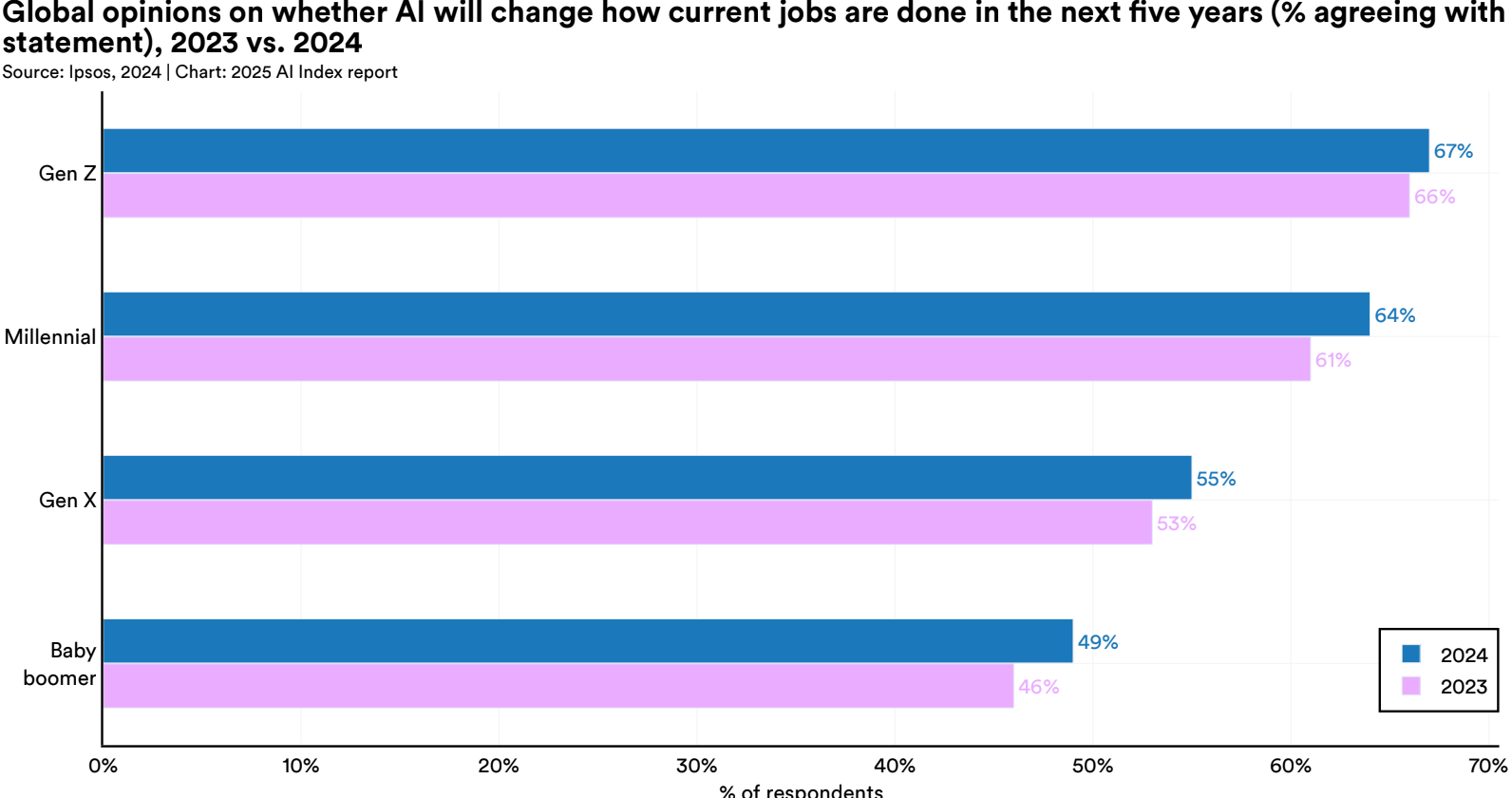

Younger people are far more likely to believe that AI will change the way they work. In 2024, 67% of Gen Z agreed that AI would alter how they do their jobs in the next five years. That number drops steadily with age. Only 49% of baby boomers said the same.

This difference makes sense. Younger generations have grown up immersed in digital ecosystems. They are used to algorithms influencing their daily routines, from what they watch and buy to how they communicate. To them, AI is not an abstract future technology. It is a practical and immediate force.

Older generations may not feel the same urgency. Some work in roles that are less likely to be reshaped by AI. Others have lived through enough technology hype cycles to know that not every promise lands. The result is a more cautious outlook, or at least a slower one.

Both reactions, the eager and the cautious, are valid. But only one of them is preparing to adapt early. The other is waiting to see what happens.

Conclusion: Why Understanding Matters

AI is already here, embedded in the systems we use, the institutions we rely on, and the decisions being made about us and around us. And yet, public understanding of AI remains shallow. That disconnect matters.

Public opinion is not harmless background noise. It shapes regulation, investment, adoption, and resistance. When it is based on marketing gloss or media panic, the results are either overreaction or complacency. Neither is helpful. What we need is something much harder to cultivate: calm, factual, critical understanding.

The truth is, public opinion is shaped by many things that have little to do with facts or technical knowledge. These include:

- Media framing, especially sensationalism and simplified narratives

- Corporate marketing, often designed to oversell usefulness and downplay risk

- Political rhetoric, where AI becomes a symbol for either national power or social collapse

- Tech influencers and hype culture, who package speculation as insight

- Cultural narratives around progress, control, or disruption

- Peer influence, including workplace myths or casual online takes

- Personal exposure to specific tools, which may not reflect the technology as a whole

- Education gaps that leave people overconfident about what they think they know

None of this makes the public irrational. It makes them human. But it also makes clear why informed public discourse is so difficult to sustain. If AI is going to shape society, then society needs to actually understand what it is engaging with. That means calling out blind trust, questioning overconfidence, and building space for meaningful public education.

Understanding AI is a civic skill. And right now, the gap between public opinion and informed insight is too wide to ignore.