The EU's Artificial Intelligence Act has become an easy target for criticism, especially from those who haven't taken the time to read it. Instead of engaging with its actual content, many prefer to dismiss it as yet another bureaucratic overreach. But the truth is more complex. The AI Act isn't perfect, but it's a serious attempt to shape how AI develops in Europe in line with democratic values, human rights, and public trust.

Meanwhile, there are people doing the real work, developing thoughtful and innovative approaches to AI governance. Rather than shouting from the sidelines, they're showing how regulation can be used not as a brake but as a lever for ethical innovation and global leadership.

If we want innovation that lasts, we need regulation that makes sense. And the AI Act, for all the noise, does just that.

1. The AI Act isn't the problem, but ignorance is

I still hear a lot of unfounded criticism about the AI Act. Just last week, on a mainstream French economic program that usually brings in experts to explain complex issues, I watched a CEO from the so-called "digital sector" tear into the AI Act while actually discussing another regulation. The AI Act was used as THE example of a bad regulation made by the European Commission. The European Commission makes a ton of questionable regulations, but using the AI Act as THE example, really?!

The arguments were, if we can call them arguments, embarrassing:

- Regulation is bad. That's it

- The AI Act is 300 pages long. Who has time for that?

Apparently not the so-called experts invited on this program. Also, the core text is only around 150 pages, so I don't know what 300-page document this guy was talking about.

His comments made it obvious he hadn't read the text. That wouldn't be such a big deal if he didn't pretend to be an expert. He is knowledgeable about a lot of things, a lot more than I am. But surely, if you want to criticize a regulation, first you need to research it, and second you need to pinpoint what you don't like about it.

But here's the thing. There are plenty of people who have taken the time to break the Act down and explain it. In France, Cigref has already started publishing a comprehensive guide for companies looking to implement the AI Act. I am sure organizations in other countries have done the same. So if the original text is too much for you, please read the hundreds of other resources that break it down and simplify it.

So let's stop pretending this criticism is based on knowledge or good faith.

What's so terrible about the AI Act, exactly?

Behind this fear-mongering, why is the AI Act THE example for bad regulation?

- It bans AI social scoring. Yes, the AI Act draws a clear line by prohibiting systems that rank people's behavior like a dystopian loyalty program. Oh la la, how terrible it is to say no to mass surveillance and systemic discrimination

- It asks companies building high-risk AI systems to document how they've trained their models. Oh la la, transparency? Quelle horreur! Apparently, asking for basic information from companies deploying powerful, decision-making systems is a step too far

- It requires documentation of security measures. Because clearly, no AI system has ever been hacked or mishandled confidential business or personal data

- It mandates basic AI governance. And that's somehow seen as an outrageous burden, rather than a minimal safeguard when deploying powerful tools in healthcare, finance, or education

- It was built with input from the industry. Critics can't make up their minds. Some say the industry had too much influence. Others act like it was excluded entirely. In reality, companies from startups to tech giants were at the table. That's how regulation should be shaped. Industry players have lobbied hard to be involved, and for once, we cannot criticize the EU for not listening

For most AI use cases, the AI Act is mainly about making sure companies have basic AI governance. Frankly, who wants to buy AI services from a CEO who thinks that documenting how they use AI and how they keep it secure is too restrictive?

Why bad criticism is worse than no criticism

To be clear, serious critiques of the AI Act can exist. Not all of its provisions are perfect, and parts of it are still too open to interpretation. We are still waiting to see how each country will implement it. If it goes the way of the GDPR, we might end up with different levels of restriction from one country to the next. Also, I am sure some of its demands will, in some cases, be too hard to implement for some types of companies. We are still waiting on the harmonized standards that should set a template to follow to guarantee conformity.

But this kind of lazy, blanket criticism is dangerous. It helps no one. If you're going to oppose the law, point to specific sections that need revision. Help improve the framework. Broad attacks with no substance do two things:

- They give regulators no feedback to work with

- And they mislead the public, who often haven't read the law either

If someone wants to argue that European companies should be allowed to build social scoring tools or use AI to manipulate workers with emotional surveillance, fine. Say it clearly. That way the public can decide if they agree.

The AI Act doesn't kill innovation, it helps it

Inspired by the AI Index, I recently wrote about the importance of the public being well informed. Most people haven't read the AI Act. So when someone goes on air and compares it to other bad decisions by Brussels, people assume the worst. They might think this law means prison time for building an AI that generates biased virtual birthday cards.

God knows the EU Commission has made some bad decisions, like banning combustion cars so drastically and with so little strategy that it is basically handing the EU car market over to China. But the AI Act, again, should not be THE example you provide.

This kind of rhetoric also implies that AI can exist without regulation. That's simply not true. Every country with a serious AI sector has regulations in place. In the US, several states have strict laws around deepfakes used in election fraud or non-consensual pornography. Deepfakes are a great example. Most existing laws don't cover them properly. So if we don't create new rules, we're basically inviting people to use this tech for the worst.

A smarter regulatory model that actually supports innovation

Critics also ignore the innovative, risk-based approach the EU has taken. The AI Act is designed to support innovation. It replaces fragmented national rules with one consistent framework across the EU. It also ensures low-risk applications aren't overregulated. As I mentioned before, each country might still interpret parts of the AI Act differently, but that is still far better than each country drafting its own regulation. Imagine an AI startup having to navigate each country's AI regulation. If some CEOs, like the guy I mentioned earlier, already find it hard to read 300 pages, imagine having to read 27 times that number!

What more do people want? Apparently, they'd prefer a world where companies face no restrictions. Because when has a company ever done anything unethical for profit? Companies are known for all having one sole purpose: making society better. Of course.

The truth is, the AI Act is not an obstacle. It is a necessary structure. It aims to protect people and support trustworthy innovation. It also gives companies a stable, homogeneous regulatory framework that is far from being too restrictive. The real problem isn't the law. It's the refusal of some to engage with it seriously.

2. Regulating AI as a Value Proposition

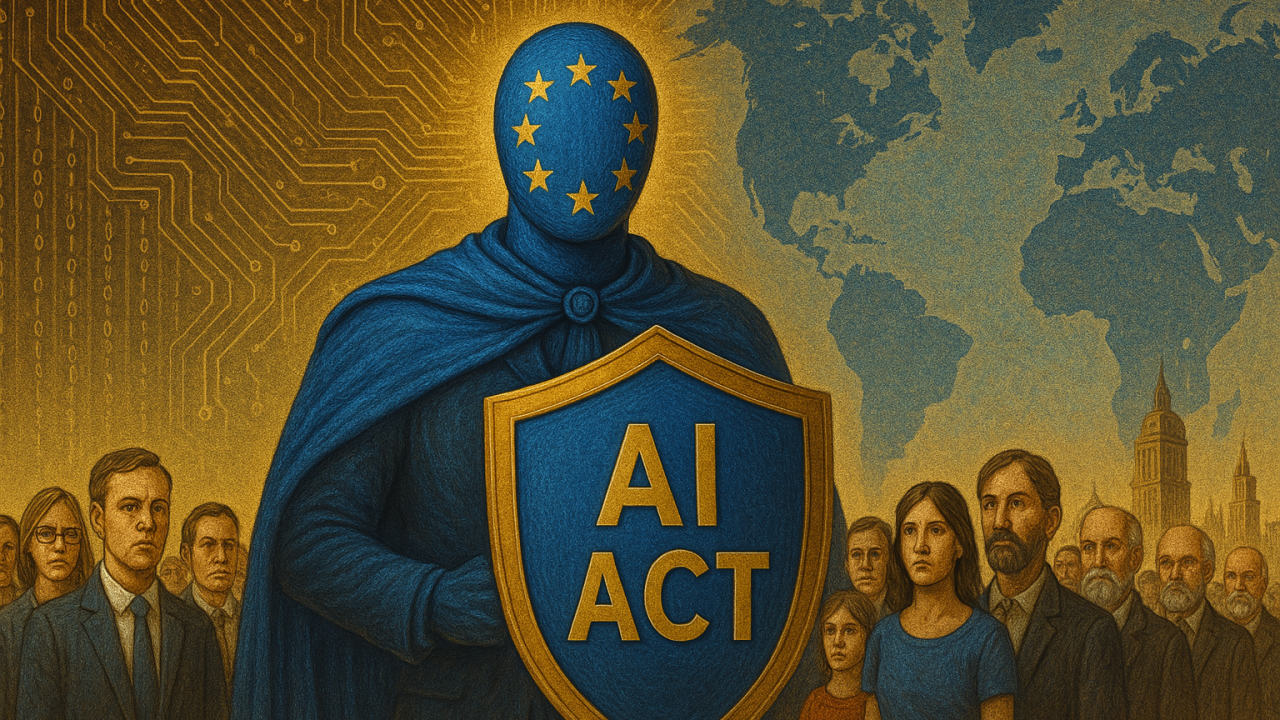

While some keep repeating that regulation kills innovation, others are actually building serious frameworks for what smart regulation could look like. One notable example is the report AI is Law by Digital New Deal, which makes a strong case for using the law not as a barrier but as strategic infrastructure. The report outlines a European vision of AI governance that supports innovation, protects rights, and strengthens digital sovereignty. Regulation, in this view, is not just about avoiding risks. It is a tool for enabling and scaling trust.

Instead of mimicking the chaos of the U.S. or the central control of China, the report proposes that Europe should use the law to define a third way. One that builds legitimacy into AI systems from the start and ensures that innovation aligns with public values.

The law is not the problem, it's the foundation

The report offers a clear proposal: law should function like a layered architecture that makes AI innovation acceptable and exportable. It uses a simple analogy, comparing legal design to a digital stack with three layers:

- Law as Infrastructure: embed fundamental rights directly into systems. These are not abstract values, but hard constraints. Privacy, non-discrimination, freedom of expression, and environmental responsibility should not be optional settings. They should be baked into how AI systems operate

- Law as a Platform: make regulation readable, interpretable, and usable by machines. This means encoding law into tools that developers can actually use. Imagine a platform where compliance can be tested in real time, and legal updates are version-controlled like software patches

- Law as a Service: ensure law can adapt to emerging use cases. AI agentification, for instance, raises new questions that existing frameworks cannot answer. This layer supports mechanisms like regulatory sandboxes and dynamic guidance, allowing the law to evolve without abandoning its core principles
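To make the "Law as a Platform" idea a bit more concrete, here is a minimal Python sketch of what machine-readable, versioned rules could look like. Everything in it is hypothetical: the rule ids, the fields used to describe a system, and the version numbers are invented for illustration. They are not taken from the report or from the text of the AI Act, and a real compliance check would be far more involved.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class Rule:
    """One machine-readable rule (hypothetical format)."""
    rule_id: str
    description: str
    check: Callable[[Dict], bool]  # returns True when the system passes


@dataclass(frozen=True)
class RuleSet:
    """A versioned bundle of rules, so legal updates ship like patches."""
    version: str
    rules: Tuple[Rule, ...]

    def evaluate(self, system: Dict) -> List[str]:
        """Return the ids of the rules that the described system fails."""
        return [r.rule_id for r in self.rules if not r.check(system)]


# Illustrative rules only, loosely modeled on obligations mentioned in this article.
RULES_V1 = RuleSet(
    version="1.0.0",
    rules=(
        Rule(
            "no-social-scoring",
            "Social scoring systems are prohibited.",
            lambda s: s.get("purpose") != "social_scoring",
        ),
        Rule(
            "training-documented",
            "High-risk systems must document how the model was trained.",
            lambda s: not s.get("high_risk", False) or s.get("training_docs", False),
        ),
    ),
)

# A later "patch" adds one rule without touching the existing ones.
RULES_V1_1 = RuleSet(
    version="1.1.0",
    rules=RULES_V1.rules + (
        Rule(
            "security-documented",
            "High-risk systems must document their security measures.",
            lambda s: not s.get("high_risk", False) or s.get("security_docs", False),
        ),
    ),
)

# A hypothetical high-risk system that documents training but not security.
example_system = {"purpose": "credit_scoring", "high_risk": True, "training_docs": True}
failures_v1 = RULES_V1.evaluate(example_system)
failures_v1_1 = RULES_V1_1.evaluate(example_system)
```

Under these assumptions, the same system description can be re-checked against each new version of the rules, which is roughly what "compliance tested in real time" would mean for a developer.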

This model does not pretend regulation is easy. It argues instead that we need to stop pretending innovation happens in a legal vacuum.

Legal clarity is not a constraint, it is a weapon

One of the key ideas in the report is that regulation provides legal capital. In other words, if a company can demonstrate compliance with a well-respected legal regime, that becomes a strategic asset. It makes their AI systems easier to scale, easier to export, and more resilient to public backlash. Legal clarity builds trust not just with regulators, but with users, partners, and governments.

The report also responds directly to those who claim regulation will push AI companies out of Europe. It points out that acceptability is a market condition. According to its research, 68 percent of people in France are afraid of AI, and 67 percent say regulation is necessary. You cannot grow an AI market if people do not want the technology near their children, their jobs, or their courts. Regulation is not in the way of adoption. It is what makes adoption possible.

Digital sovereignty requires legal sovereignty

Another major point is that legal sovereignty is a precondition for digital sovereignty. If Europe wants to have control over its technological future, it must be able to define and enforce its own legal standards. This is not just about keeping Big Tech in check. It is about avoiding dependency on foreign models and asserting democratic control over algorithmic systems. The report suggests that law should be seen as part of Europe's industrial policy. Not just about what we allow, but about what we choose to build.

It even proposes making code the 25th official language of the European Union, a symbolic but powerful way to signal that law and technology must speak to each other, that legal reasoning should be executable, and that systems should be designed with law in mind from the beginning.

The real value proposition

In the end, the report does not frame regulation as a nuisance. It presents it as a foundation for a European digital strategy. Regulation creates conditions for fair competition. It gives startups a clear path to market. It provides users with meaningful guarantees. And it gives the rest of the world a reason to trust European-made technology.

This is the value proposition: build AI systems that are not just powerful, but defensible. Systems that do not collapse under scrutiny. Systems that reflect democratic choices, not just technical capabilities.

If that sounds boring to the loudest voices in the room, so be it. The alternative is a race to the bottom. And Europe has chosen not to run in that direction.

Conclusion

The AI Act is not a perfect law, but it is a serious one. It tackles real risks, offers clear rules, and creates space for responsible innovation. Dismissing it with vague, uninformed criticism does nothing to improve it or help anyone understand what's really at stake.

Efforts like AI is Law show that regulation can be more than compliance. It can be a strategic asset. Europe doesn't need to choose between innovation and responsibility. It can lead with both.

If we want AI people can trust, then regulation is not the problem. It's part of the solution.